diff --git a/docker/test/fuzzer/run-fuzzer.sh b/docker/test/fuzzer/run-fuzzer.sh

index 5cda0831a84..05cc92ee040 100755

--- a/docker/test/fuzzer/run-fuzzer.sh

+++ b/docker/test/fuzzer/run-fuzzer.sh

@@ -122,6 +122,23 @@ EOL

$PWD

EOL

+

+ # Set up a cluster for exporting logs to ClickHouse Cloud

+ # Note: these variables are provided to the Docker run command by the Python script in tests/ci

+ if [ -n "${CLICKHOUSE_CI_LOGS_HOST}" ]

+ then

+ echo "

+remote_servers:
+    system_logs_export:
+        shard:
+            replica:
+                secure: 1
+                user: ci
+                host: '${CLICKHOUSE_CI_LOGS_HOST}'
+                port: 9440
+                password: '${CLICKHOUSE_CI_LOGS_PASSWORD}'
+" > db/config.d/system_logs_export.yaml

+ fi

}

function filter_exists_and_template

@@ -223,7 +240,22 @@ quit

done

clickhouse-client --query "select 1" # This checks that the server is responding

kill -0 $server_pid # This checks that it is our server that is started and not some other one

- echo Server started and responded

+ echo 'Server started and responded'

+

+ # Initialize export of system logs to ClickHouse Cloud

+ if [ -n "${CLICKHOUSE_CI_LOGS_HOST}" ]

+ then

+ export EXTRA_COLUMNS_EXPRESSION="$PR_TO_TEST AS pull_request_number, '$SHA_TO_TEST' AS commit_sha, '$CHECK_START_TIME' AS check_start_time, '$CHECK_NAME' AS check_name, '$INSTANCE_TYPE' AS instance_type"

+ # TODO: Check if the password will appear in the logs.

+ export CONNECTION_PARAMETERS="--secure --user ci --host ${CLICKHOUSE_CI_LOGS_HOST} --password ${CLICKHOUSE_CI_LOGS_PASSWORD}"

+

+ /setup_export_logs.sh

+

+ # Unset variables after use

+ export CONNECTION_PARAMETERS=''

+ export CLICKHOUSE_CI_LOGS_HOST=''

+ export CLICKHOUSE_CI_LOGS_PASSWORD=''

+ fi

# SC2012: Use find instead of ls to better handle non-alphanumeric filenames. They are all alphanumeric.

# SC2046: Quote this to prevent word splitting. Actually I need word splitting.

diff --git a/docs/_includes/install/universal.sh b/docs/_includes/install/universal.sh

index 5d4571aed9e..0ae77f464eb 100755

--- a/docs/_includes/install/universal.sh

+++ b/docs/_includes/install/universal.sh

@@ -36,6 +36,9 @@ then

elif [ "${ARCH}" = "riscv64" ]

then

DIR="riscv64"

+ elif [ "${ARCH}" = "s390x" ]

+ then

+ DIR="s390x"

fi

elif [ "${OS}" = "FreeBSD" ]

then

diff --git a/docs/en/engines/table-engines/mergetree-family/annindexes.md b/docs/en/engines/table-engines/mergetree-family/annindexes.md

index 5944048f6c3..81c69215472 100644

--- a/docs/en/engines/table-engines/mergetree-family/annindexes.md

+++ b/docs/en/engines/table-engines/mergetree-family/annindexes.md

@@ -1,4 +1,4 @@

-# Approximate Nearest Neighbor Search Indexes [experimental] {#table_engines-ANNIndex}

+# Approximate Nearest Neighbor Search Indexes [experimental]

Nearest neighborhood search is the problem of finding the M closest points for a given point in an N-dimensional vector space. The most

straightforward approach to solve this problem is a brute force search where the distance between all points in the vector space and the

@@ -17,7 +17,7 @@ In terms of SQL, the nearest neighborhood problem can be expressed as follows:

``` sql

SELECT *

-FROM table

+FROM table_with_ann_index

ORDER BY Distance(vectors, Point)

LIMIT N

```

@@ -32,7 +32,7 @@ An alternative formulation of the nearest neighborhood search problem looks as f

``` sql

SELECT *

-FROM table

+FROM table_with_ann_index

WHERE Distance(vectors, Point) < MaxDistance

LIMIT N

```

@@ -45,12 +45,12 @@ With brute force search, both queries are expensive (linear in the number of poi

`Point` must be computed. To speed this process up, Approximate Nearest Neighbor Search Indexes (ANN indexes) store a compact representation

of the search space (using clustering, search trees, etc.) which allows to compute an approximate answer much quicker (in sub-linear time).

-# Creating and Using ANN Indexes

+# Creating and Using ANN Indexes {#creating_using_ann_indexes}

Syntax to create an ANN index over an [Array](../../../sql-reference/data-types/array.md) column:

```sql

-CREATE TABLE table

+CREATE TABLE table_with_ann_index

(

`id` Int64,

`vectors` Array(Float32),

@@ -63,7 +63,7 @@ ORDER BY id;

Syntax to create an ANN index over a [Tuple](../../../sql-reference/data-types/tuple.md) column:

```sql

-CREATE TABLE table

+CREATE TABLE table_with_ann_index

(

`id` Int64,

`vectors` Tuple(Float32[, Float32[, ...]]),

@@ -83,7 +83,7 @@ ANN indexes support two types of queries:

``` sql

SELECT *

- FROM table

+ FROM table_with_ann_index

[WHERE ...]

ORDER BY Distance(vectors, Point)

LIMIT N

@@ -93,7 +93,7 @@ ANN indexes support two types of queries:

``` sql

SELECT *

- FROM table

+ FROM table_with_ann_index

WHERE Distance(vectors, Point) < MaxDistance

LIMIT N

```

@@ -103,7 +103,7 @@ To avoid writing out large vectors, you can use [query

parameters](/docs/en/interfaces/cli.md#queries-with-parameters-cli-queries-with-parameters), e.g.

```bash

-clickhouse-client --param_vec='hello' --query="SELECT * FROM table WHERE L2Distance(vectors, {vec: Array(Float32)}) < 1.0"

+clickhouse-client --param_vec='hello' --query="SELECT * FROM table_with_ann_index WHERE L2Distance(vectors, {vec: Array(Float32)}) < 1.0"

```

:::

@@ -138,7 +138,7 @@ back to a smaller `GRANULARITY` values only in case of problems like excessive m

was specified for ANN indexes, the default value is 100 million.

-# Available ANN Indexes

+# Available ANN Indexes {#available_ann_indexes}

- [Annoy](/docs/en/engines/table-engines/mergetree-family/annindexes.md#annoy-annoy)

@@ -165,7 +165,7 @@ space in random linear surfaces (lines in 2D, planes in 3D etc.).

Syntax to create an Annoy index over an [Array](../../../sql-reference/data-types/array.md) column:

```sql

-CREATE TABLE table

+CREATE TABLE table_with_annoy_index

(

id Int64,

vectors Array(Float32),

@@ -178,7 +178,7 @@ ORDER BY id;

Syntax to create an ANN index over a [Tuple](../../../sql-reference/data-types/tuple.md) column:

```sql

-CREATE TABLE table

+CREATE TABLE table_with_annoy_index

(

id Int64,

vectors Tuple(Float32[, Float32[, ...]]),

@@ -188,23 +188,17 @@ ENGINE = MergeTree

ORDER BY id;

```

-Annoy currently supports `L2Distance` and `cosineDistance` as distance function `Distance`. If no distance function was specified during

-index creation, `L2Distance` is used as default. Parameter `NumTrees` is the number of trees which the algorithm creates (default if not

-specified: 100). Higher values of `NumTree` mean more accurate search results but slower index creation / query times (approximately

-linearly) as well as larger index sizes.

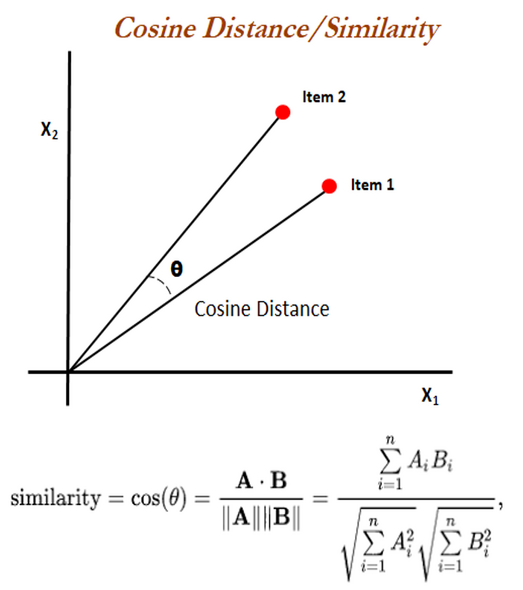

+Annoy currently supports two distance functions:

+- `L2Distance`, also called Euclidean distance, is the length of a line segment between two points in Euclidean space

+ ([Wikipedia](https://en.wikipedia.org/wiki/Euclidean_distance)).

+- `cosineDistance` is one minus the cosine similarity, where cosine similarity is the cosine of the angle between two (non-zero) vectors
+  ([Wikipedia](https://en.wikipedia.org/wiki/Cosine_similarity)).

-`L2Distance` is also called Euclidean distance, the Euclidean distance between two points in Euclidean space is the length of a line segment between the two points.

-For example: If we have point P(p1,p2), Q(q1,q2), their distance will be d(p,q)

-

+For normalized data, `L2Distance` is usually a better choice; otherwise, `cosineDistance` is recommended to compensate for differences in scale. If no
+distance function was specified during index creation, `L2Distance` is used by default.

-`cosineDistance` also called cosine similarity is a measure of similarity between two non-zero vectors defined in an inner product space. Cosine similarity is the cosine of the angle between the vectors; that is, it is the dot product of the vectors divided by the product of their lengths.

-

-

-The Euclidean distance corresponds to the L2-norm of a difference between vectors. The cosine similarity is proportional to the dot product of two vectors and inversely proportional to the product of their magnitudes.

-

-In one sentence: cosine similarity care only about the angle between them, but do not care about the "distance" we normally think.

-

-

+Parameter `NumTrees` is the number of trees which the algorithm creates (default if not specified: 100). Higher values of `NumTrees` mean
+more accurate search results but slower index creation / query times (approximately linearly) as well as larger index sizes.

:::note

Indexes over columns of type `Array` will generally work faster than indexes on `Tuple` columns. All arrays **must** have same length. Use
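To make the scale argument in the Annoy hunk concrete, here is a minimal C++ sketch of the two distance functions. The helpers are plain functions named after the SQL functions, not ClickHouse's implementation, and the sketch assumes `cosineDistance` is computed as 1 minus cosine similarity, which is how ClickHouse documents its SQL `cosineDistance` function. The vectors `{1, 2}` and `{2, 4}` point in the same direction, so their cosine distance is 0 up to floating-point rounding, while their L2 distance is √5.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Euclidean (L2) distance: sqrt(sum_i (a_i - b_i)^2).
double l2Distance(const std::vector<double> & a, const std::vector<double> & b)
{
    assert(a.size() == b.size());
    double sum = 0.0;
    for (std::size_t i = 0; i < a.size(); ++i)
    {
        const double d = a[i] - b[i];
        sum += d * d;
    }
    return std::sqrt(sum);
}

// Cosine distance: 1 - (a . b) / (|a| * |b|).
// Both vectors must be non-zero, otherwise the denominator is 0.
double cosineDistance(const std::vector<double> & a, const std::vector<double> & b)
{
    assert(a.size() == b.size());
    double dot = 0.0;
    double norm_a = 0.0;
    double norm_b = 0.0;
    for (std::size_t i = 0; i < a.size(); ++i)
    {
        dot += a[i] * b[i];
        norm_a += a[i] * a[i];
        norm_b += b[i] * b[i];
    }
    return 1.0 - dot / (std::sqrt(norm_a) * std::sqrt(norm_b));
}
```

Because cosine distance ignores vector length, it is the more forgiving choice when vectors are not normalized. For unit-length vectors the two functions rank neighbors identically, since ‖a − b‖² = 2 − 2·cos(a, b).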

diff --git a/docs/en/interfaces/formats.md b/docs/en/interfaces/formats.md

index 0d1308afc4d..e2122380510 100644

--- a/docs/en/interfaces/formats.md

+++ b/docs/en/interfaces/formats.md

@@ -11,82 +11,83 @@ results of a `SELECT`, and to perform `INSERT`s into a file-backed table.

The supported formats are:

| Format | Input | Output |

-|-------------------------------------------------------------------------------------------|------|--------|

-| [TabSeparated](#tabseparated) | ✔ | ✔ |

-| [TabSeparatedRaw](#tabseparatedraw) | ✔ | ✔ |

-| [TabSeparatedWithNames](#tabseparatedwithnames) | ✔ | ✔ |

-| [TabSeparatedWithNamesAndTypes](#tabseparatedwithnamesandtypes) | ✔ | ✔ |

-| [TabSeparatedRawWithNames](#tabseparatedrawwithnames) | ✔ | ✔ |

-| [TabSeparatedRawWithNamesAndTypes](#tabseparatedrawwithnamesandtypes) | ✔ | ✔ |

-| [Template](#format-template) | ✔ | ✔ |

-| [TemplateIgnoreSpaces](#templateignorespaces) | ✔ | ✗ |

-| [CSV](#csv) | ✔ | ✔ |

-| [CSVWithNames](#csvwithnames) | ✔ | ✔ |

-| [CSVWithNamesAndTypes](#csvwithnamesandtypes) | ✔ | ✔ |

-| [CustomSeparated](#format-customseparated) | ✔ | ✔ |

-| [CustomSeparatedWithNames](#customseparatedwithnames) | ✔ | ✔ |

-| [CustomSeparatedWithNamesAndTypes](#customseparatedwithnamesandtypes) | ✔ | ✔ |

-| [SQLInsert](#sqlinsert) | ✗ | ✔ |

-| [Values](#data-format-values) | ✔ | ✔ |

-| [Vertical](#vertical) | ✗ | ✔ |

-| [JSON](#json) | ✔ | ✔ |

-| [JSONAsString](#jsonasstring) | ✔ | ✗ |

-| [JSONStrings](#jsonstrings) | ✔ | ✔ |

-| [JSONColumns](#jsoncolumns) | ✔ | ✔ |

-| [JSONColumnsWithMetadata](#jsoncolumnsmonoblock)) | ✔ | ✔ |

-| [JSONCompact](#jsoncompact) | ✔ | ✔ |

-| [JSONCompactStrings](#jsoncompactstrings) | ✗ | ✔ |

-| [JSONCompactColumns](#jsoncompactcolumns) | ✔ | ✔ |

-| [JSONEachRow](#jsoneachrow) | ✔ | ✔ |

-| [PrettyJSONEachRow](#prettyjsoneachrow) | ✗ | ✔ |

-| [JSONEachRowWithProgress](#jsoneachrowwithprogress) | ✗ | ✔ |

-| [JSONStringsEachRow](#jsonstringseachrow) | ✔ | ✔ |

-| [JSONStringsEachRowWithProgress](#jsonstringseachrowwithprogress) | ✗ | ✔ |

-| [JSONCompactEachRow](#jsoncompacteachrow) | ✔ | ✔ |

-| [JSONCompactEachRowWithNames](#jsoncompacteachrowwithnames) | ✔ | ✔ |

-| [JSONCompactEachRowWithNamesAndTypes](#jsoncompacteachrowwithnamesandtypes) | ✔ | ✔ |

-| [JSONCompactStringsEachRow](#jsoncompactstringseachrow) | ✔ | ✔ |

-| [JSONCompactStringsEachRowWithNames](#jsoncompactstringseachrowwithnames) | ✔ | ✔ |

-| [JSONCompactStringsEachRowWithNamesAndTypes](#jsoncompactstringseachrowwithnamesandtypes) | ✔ | ✔ |

-| [JSONObjectEachRow](#jsonobjecteachrow) | ✔ | ✔ |

-| [BSONEachRow](#bsoneachrow) | ✔ | ✔ |

-| [TSKV](#tskv) | ✔ | ✔ |

-| [Pretty](#pretty) | ✗ | ✔ |

-| [PrettyNoEscapes](#prettynoescapes) | ✗ | ✔ |

-| [PrettyMonoBlock](#prettymonoblock) | ✗ | ✔ |

-| [PrettyNoEscapesMonoBlock](#prettynoescapesmonoblock) | ✗ | ✔ |

-| [PrettyCompact](#prettycompact) | ✗ | ✔ |

-| [PrettyCompactNoEscapes](#prettycompactnoescapes) | ✗ | ✔ |

-| [PrettyCompactMonoBlock](#prettycompactmonoblock) | ✗ | ✔ |

-| [PrettyCompactNoEscapesMonoBlock](#prettycompactnoescapesmonoblock) | ✗ | ✔ |

-| [PrettySpace](#prettyspace) | ✗ | ✔ |

-| [PrettySpaceNoEscapes](#prettyspacenoescapes) | ✗ | ✔ |

-| [PrettySpaceMonoBlock](#prettyspacemonoblock) | ✗ | ✔ |

-| [PrettySpaceNoEscapesMonoBlock](#prettyspacenoescapesmonoblock) | ✗ | ✔ |

-| [Prometheus](#prometheus) | ✗ | ✔ |

-| [Protobuf](#protobuf) | ✔ | ✔ |

-| [ProtobufSingle](#protobufsingle) | ✔ | ✔ |

-| [Avro](#data-format-avro) | ✔ | ✔ |

-| [AvroConfluent](#data-format-avro-confluent) | ✔ | ✗ |

-| [Parquet](#data-format-parquet) | ✔ | ✔ |

-| [ParquetMetadata](#data-format-parquet-metadata) | ✔ | ✗ |

-| [Arrow](#data-format-arrow) | ✔ | ✔ |

-| [ArrowStream](#data-format-arrow-stream) | ✔ | ✔ |

-| [ORC](#data-format-orc) | ✔ | ✔ |

-| [RowBinary](#rowbinary) | ✔ | ✔ |

-| [RowBinaryWithNames](#rowbinarywithnamesandtypes) | ✔ | ✔ |

-| [RowBinaryWithNamesAndTypes](#rowbinarywithnamesandtypes) | ✔ | ✔ |

-| [RowBinaryWithDefaults](#rowbinarywithdefaults) | ✔ | ✔ |

-| [Native](#native) | ✔ | ✔ |

-| [Null](#null) | ✗ | ✔ |

-| [XML](#xml) | ✗ | ✔ |

-| [CapnProto](#capnproto) | ✔ | ✔ |

-| [LineAsString](#lineasstring) | ✔ | ✔ |

-| [Regexp](#data-format-regexp) | ✔ | ✗ |

-| [RawBLOB](#rawblob) | ✔ | ✔ |

-| [MsgPack](#msgpack) | ✔ | ✔ |

-| [MySQLDump](#mysqldump) | ✔ | ✗ |

-| [Markdown](#markdown) | ✗ | ✔ |

+|-------------------------------------------------------------------------------------------|------|-------|

+| [TabSeparated](#tabseparated) | ✔ | ✔ |

+| [TabSeparatedRaw](#tabseparatedraw) | ✔ | ✔ |

+| [TabSeparatedWithNames](#tabseparatedwithnames) | ✔ | ✔ |

+| [TabSeparatedWithNamesAndTypes](#tabseparatedwithnamesandtypes) | ✔ | ✔ |

+| [TabSeparatedRawWithNames](#tabseparatedrawwithnames) | ✔ | ✔ |

+| [TabSeparatedRawWithNamesAndTypes](#tabseparatedrawwithnamesandtypes) | ✔ | ✔ |

+| [Template](#format-template) | ✔ | ✔ |

+| [TemplateIgnoreSpaces](#templateignorespaces) | ✔ | ✗ |

+| [CSV](#csv) | ✔ | ✔ |

+| [CSVWithNames](#csvwithnames) | ✔ | ✔ |

+| [CSVWithNamesAndTypes](#csvwithnamesandtypes) | ✔ | ✔ |

+| [CustomSeparated](#format-customseparated) | ✔ | ✔ |

+| [CustomSeparatedWithNames](#customseparatedwithnames) | ✔ | ✔ |

+| [CustomSeparatedWithNamesAndTypes](#customseparatedwithnamesandtypes) | ✔ | ✔ |

+| [SQLInsert](#sqlinsert) | ✗ | ✔ |

+| [Values](#data-format-values) | ✔ | ✔ |

+| [Vertical](#vertical) | ✗ | ✔ |

+| [JSON](#json) | ✔ | ✔ |

+| [JSONAsString](#jsonasstring) | ✔ | ✗ |

+| [JSONStrings](#jsonstrings) | ✔ | ✔ |

+| [JSONColumns](#jsoncolumns) | ✔ | ✔ |

+| [JSONColumnsWithMetadata](#jsoncolumnsmonoblock) | ✔ | ✔ |

+| [JSONCompact](#jsoncompact) | ✔ | ✔ |

+| [JSONCompactStrings](#jsoncompactstrings) | ✗ | ✔ |

+| [JSONCompactColumns](#jsoncompactcolumns) | ✔ | ✔ |

+| [JSONEachRow](#jsoneachrow) | ✔ | ✔ |

+| [PrettyJSONEachRow](#prettyjsoneachrow) | ✗ | ✔ |

+| [JSONEachRowWithProgress](#jsoneachrowwithprogress) | ✗ | ✔ |

+| [JSONStringsEachRow](#jsonstringseachrow) | ✔ | ✔ |

+| [JSONStringsEachRowWithProgress](#jsonstringseachrowwithprogress) | ✗ | ✔ |

+| [JSONCompactEachRow](#jsoncompacteachrow) | ✔ | ✔ |

+| [JSONCompactEachRowWithNames](#jsoncompacteachrowwithnames) | ✔ | ✔ |

+| [JSONCompactEachRowWithNamesAndTypes](#jsoncompacteachrowwithnamesandtypes) | ✔ | ✔ |

+| [JSONCompactStringsEachRow](#jsoncompactstringseachrow) | ✔ | ✔ |

+| [JSONCompactStringsEachRowWithNames](#jsoncompactstringseachrowwithnames) | ✔ | ✔ |

+| [JSONCompactStringsEachRowWithNamesAndTypes](#jsoncompactstringseachrowwithnamesandtypes) | ✔ | ✔ |

+| [JSONObjectEachRow](#jsonobjecteachrow) | ✔ | ✔ |

+| [BSONEachRow](#bsoneachrow) | ✔ | ✔ |

+| [TSKV](#tskv) | ✔ | ✔ |

+| [Pretty](#pretty) | ✗ | ✔ |

+| [PrettyNoEscapes](#prettynoescapes) | ✗ | ✔ |

+| [PrettyMonoBlock](#prettymonoblock) | ✗ | ✔ |

+| [PrettyNoEscapesMonoBlock](#prettynoescapesmonoblock) | ✗ | ✔ |

+| [PrettyCompact](#prettycompact) | ✗ | ✔ |

+| [PrettyCompactNoEscapes](#prettycompactnoescapes) | ✗ | ✔ |

+| [PrettyCompactMonoBlock](#prettycompactmonoblock) | ✗ | ✔ |

+| [PrettyCompactNoEscapesMonoBlock](#prettycompactnoescapesmonoblock) | ✗ | ✔ |

+| [PrettySpace](#prettyspace) | ✗ | ✔ |

+| [PrettySpaceNoEscapes](#prettyspacenoescapes) | ✗ | ✔ |

+| [PrettySpaceMonoBlock](#prettyspacemonoblock) | ✗ | ✔ |

+| [PrettySpaceNoEscapesMonoBlock](#prettyspacenoescapesmonoblock) | ✗ | ✔ |

+| [Prometheus](#prometheus) | ✗ | ✔ |

+| [Protobuf](#protobuf) | ✔ | ✔ |

+| [ProtobufSingle](#protobufsingle) | ✔ | ✔ |

+| [Avro](#data-format-avro) | ✔ | ✔ |

+| [AvroConfluent](#data-format-avro-confluent) | ✔ | ✗ |

+| [Parquet](#data-format-parquet) | ✔ | ✔ |

+| [ParquetMetadata](#data-format-parquet-metadata) | ✔ | ✗ |

+| [Arrow](#data-format-arrow) | ✔ | ✔ |

+| [ArrowStream](#data-format-arrow-stream) | ✔ | ✔ |

+| [ORC](#data-format-orc) | ✔ | ✔ |

+| [One](#data-format-one) | ✔ | ✗ |

+| [RowBinary](#rowbinary) | ✔ | ✔ |

+| [RowBinaryWithNames](#rowbinarywithnamesandtypes) | ✔ | ✔ |

+| [RowBinaryWithNamesAndTypes](#rowbinarywithnamesandtypes) | ✔ | ✔ |

+| [RowBinaryWithDefaults](#rowbinarywithdefaults) | ✔ | ✔ |

+| [Native](#native) | ✔ | ✔ |

+| [Null](#null) | ✗ | ✔ |

+| [XML](#xml) | ✗ | ✔ |

+| [CapnProto](#capnproto) | ✔ | ✔ |

+| [LineAsString](#lineasstring) | ✔ | ✔ |

+| [Regexp](#data-format-regexp) | ✔ | ✗ |

+| [RawBLOB](#rawblob) | ✔ | ✔ |

+| [MsgPack](#msgpack) | ✔ | ✔ |

+| [MySQLDump](#mysqldump) | ✔ | ✗ |

+| [Markdown](#markdown) | ✗ | ✔ |

You can control some format processing parameters with the ClickHouse settings. For more information read the [Settings](/docs/en/operations/settings/settings-formats.md) section.

@@ -2131,6 +2132,7 @@ To exchange data with Hadoop, you can use [HDFS table engine](/docs/en/engines/t

- [output_format_parquet_row_group_size](/docs/en/operations/settings/settings-formats.md/#output_format_parquet_row_group_size) - row group size in rows while data output. Default value - `1000000`.

- [output_format_parquet_string_as_string](/docs/en/operations/settings/settings-formats.md/#output_format_parquet_string_as_string) - use Parquet String type instead of Binary for String columns. Default value - `false`.

+- [input_format_parquet_import_nested](/docs/en/operations/settings/settings-formats.md/#input_format_parquet_import_nested) - allow inserting array of structs into [Nested](/docs/en/sql-reference/data-types/nested-data-structures/index.md) table in Parquet input format. Default value - `false`.

- [input_format_parquet_case_insensitive_column_matching](/docs/en/operations/settings/settings-formats.md/#input_format_parquet_case_insensitive_column_matching) - ignore case when matching Parquet columns with ClickHouse columns. Default value - `false`.

- [input_format_parquet_allow_missing_columns](/docs/en/operations/settings/settings-formats.md/#input_format_parquet_allow_missing_columns) - allow missing columns while reading Parquet data. Default value - `false`.

- [input_format_parquet_skip_columns_with_unsupported_types_in_schema_inference](/docs/en/operations/settings/settings-formats.md/#input_format_parquet_skip_columns_with_unsupported_types_in_schema_inference) - allow skipping columns with unsupported types while schema inference for Parquet format. Default value - `false`.

@@ -2407,6 +2409,34 @@ $ clickhouse-client --query="SELECT * FROM {some_table} FORMAT ORC" > {filename.

To exchange data with Hadoop, you can use [HDFS table engine](/docs/en/engines/table-engines/integrations/hdfs.md).

+## One {#data-format-one}

+

+Special input format that doesn't read any data from the file and returns only one row per file, with a single column named `dummy` of type `UInt8` and value `0` (like the `system.one` table).
+It can be used with the virtual columns `_file`/`_path` to list all files without reading the actual data.

+

+Example:

+

+Query:

+```sql

+SELECT _file FROM file('path/to/files/data*', One);

+```

+

+Result:

+```text

+┌─_file────┐

+│ data.csv │

+└──────────┘

+┌─_file──────┐

+│ data.jsonl │

+└────────────┘

+┌─_file────┐

+│ data.tsv │

+└──────────┘

+┌─_file────────┐

+│ data.parquet │

+└──────────────┘

+```

+

## LineAsString {#lineasstring}

In this format, every line of input data is interpreted as a single string value. This format can only be parsed for table with a single field of type [String](/docs/en/sql-reference/data-types/string.md). The remaining columns must be set to [DEFAULT](/docs/en/sql-reference/statements/create/table.md/#default) or [MATERIALIZED](/docs/en/sql-reference/statements/create/table.md/#materialized), or omitted.

diff --git a/docs/en/interfaces/third-party/integrations.md b/docs/en/interfaces/third-party/integrations.md

index 3e1b1e84f5d..a9f1af93495 100644

--- a/docs/en/interfaces/third-party/integrations.md

+++ b/docs/en/interfaces/third-party/integrations.md

@@ -83,8 +83,8 @@ ClickHouse, Inc. does **not** maintain the tools and libraries listed below and

- Python

- [SQLAlchemy](https://www.sqlalchemy.org)

- [sqlalchemy-clickhouse](https://github.com/cloudflare/sqlalchemy-clickhouse) (uses [infi.clickhouse_orm](https://github.com/Infinidat/infi.clickhouse_orm))

- - [pandas](https://pandas.pydata.org)

- - [pandahouse](https://github.com/kszucs/pandahouse)

+ - [PyArrow/Pandas](https://pandas.pydata.org)

+ - [Ibis](https://github.com/ibis-project/ibis)

- PHP

- [Doctrine](https://www.doctrine-project.org/)

- [dbal-clickhouse](https://packagist.org/packages/friendsofdoctrine/dbal-clickhouse)

diff --git a/docs/en/sql-reference/statements/insert-into.md b/docs/en/sql-reference/statements/insert-into.md

index d6e30827f9b..e0cc98c2351 100644

--- a/docs/en/sql-reference/statements/insert-into.md

+++ b/docs/en/sql-reference/statements/insert-into.md

@@ -11,7 +11,7 @@ Inserts data into a table.

**Syntax**

``` sql

-INSERT INTO [db.]table [(c1, c2, c3)] VALUES (v11, v12, v13), (v21, v22, v23), ...

+INSERT INTO [TABLE] [db.]table [(c1, c2, c3)] VALUES (v11, v12, v13), (v21, v22, v23), ...

```

You can specify a list of columns to insert using the `(c1, c2, c3)`. You can also use an expression with column [matcher](../../sql-reference/statements/select/index.md#asterisk) such as `*` and/or [modifiers](../../sql-reference/statements/select/index.md#select-modifiers) such as [APPLY](../../sql-reference/statements/select/index.md#apply-modifier), [EXCEPT](../../sql-reference/statements/select/index.md#except-modifier), [REPLACE](../../sql-reference/statements/select/index.md#replace-modifier).

@@ -107,7 +107,7 @@ If table has [constraints](../../sql-reference/statements/create/table.md#constr

**Syntax**

``` sql

-INSERT INTO [db.]table [(c1, c2, c3)] SELECT ...

+INSERT INTO [TABLE] [db.]table [(c1, c2, c3)] SELECT ...

```

Columns are mapped according to their position in the SELECT clause. However, their names in the SELECT expression and the table for INSERT may differ. If necessary, type casting is performed.

@@ -126,7 +126,7 @@ To insert a default value instead of `NULL` into a column with not nullable data

**Syntax**

``` sql

-INSERT INTO [db.]table [(c1, c2, c3)] FROM INFILE file_name [COMPRESSION type] FORMAT format_name

+INSERT INTO [TABLE] [db.]table [(c1, c2, c3)] FROM INFILE file_name [COMPRESSION type] FORMAT format_name

```

Use the syntax above to insert data from a file, or files, stored on the **client** side. `file_name` and `type` are string literals. Input file [format](../../interfaces/formats.md) must be set in the `FORMAT` clause.

diff --git a/docs/ru/sql-reference/statements/insert-into.md b/docs/ru/sql-reference/statements/insert-into.md

index 4fa6ac4ce66..747e36b8809 100644

--- a/docs/ru/sql-reference/statements/insert-into.md

+++ b/docs/ru/sql-reference/statements/insert-into.md

@@ -11,7 +11,7 @@ sidebar_label: INSERT INTO

**Синтаксис**

``` sql

-INSERT INTO [db.]table [(c1, c2, c3)] VALUES (v11, v12, v13), (v21, v22, v23), ...

+INSERT INTO [TABLE] [db.]table [(c1, c2, c3)] VALUES (v11, v12, v13), (v21, v22, v23), ...

```

Вы можете указать список столбцов для вставки, используя синтаксис `(c1, c2, c3)`. Также можно использовать выражение cо [звездочкой](../../sql-reference/statements/select/index.md#asterisk) и/или модификаторами, такими как [APPLY](../../sql-reference/statements/select/index.md#apply-modifier), [EXCEPT](../../sql-reference/statements/select/index.md#except-modifier), [REPLACE](../../sql-reference/statements/select/index.md#replace-modifier).

@@ -100,7 +100,7 @@ INSERT INTO t FORMAT TabSeparated

**Синтаксис**

``` sql

-INSERT INTO [db.]table [(c1, c2, c3)] SELECT ...

+INSERT INTO [TABLE] [db.]table [(c1, c2, c3)] SELECT ...

```

Соответствие столбцов определяется их позицией в секции SELECT. При этом, их имена в выражении SELECT и в таблице для INSERT, могут отличаться. При необходимости выполняется приведение типов данных, эквивалентное соответствующему оператору CAST.

@@ -120,7 +120,7 @@ INSERT INTO [db.]table [(c1, c2, c3)] SELECT ...

**Синтаксис**

``` sql

-INSERT INTO [db.]table [(c1, c2, c3)] FROM INFILE file_name [COMPRESSION type] FORMAT format_name

+INSERT INTO [TABLE] [db.]table [(c1, c2, c3)] FROM INFILE file_name [COMPRESSION type] FORMAT format_name

```

Используйте этот синтаксис, чтобы вставить данные из файла, который хранится на стороне **клиента**. `file_name` и `type` задаются в виде строковых литералов. [Формат](../../interfaces/formats.md) входного файла должен быть задан в секции `FORMAT`.

diff --git a/docs/zh/sql-reference/statements/insert-into.md b/docs/zh/sql-reference/statements/insert-into.md

index 9acc1655f9a..f80c0a8a8ea 100644

--- a/docs/zh/sql-reference/statements/insert-into.md

+++ b/docs/zh/sql-reference/statements/insert-into.md

@@ -8,7 +8,7 @@ INSERT INTO 语句主要用于向系统中添加数据.

查询的基本格式:

``` sql

-INSERT INTO [db.]table [(c1, c2, c3)] VALUES (v11, v12, v13), (v21, v22, v23), ...

+INSERT INTO [TABLE] [db.]table [(c1, c2, c3)] VALUES (v11, v12, v13), (v21, v22, v23), ...

```

您可以在查询中指定要插入的列的列表,如:`[(c1, c2, c3)]`。您还可以使用列[匹配器](../../sql-reference/statements/select/index.md#asterisk)的表达式,例如`*`和/或[修饰符](../../sql-reference/statements/select/index.md#select-modifiers),例如 [APPLY](../../sql-reference/statements/select/index.md#apply-modifier), [EXCEPT](../../sql-reference/statements/select/index.md#apply-modifier), [REPLACE](../../sql-reference/statements/select/index.md#replace-modifier)。

@@ -71,7 +71,7 @@ INSERT INTO [db.]table [(c1, c2, c3)] FORMAT format_name data_set

例如,下面的查询所使用的输入格式就与上面INSERT … VALUES的中使用的输入格式相同:

``` sql

-INSERT INTO [db.]table [(c1, c2, c3)] FORMAT Values (v11, v12, v13), (v21, v22, v23), ...

+INSERT INTO [TABLE] [db.]table [(c1, c2, c3)] FORMAT Values (v11, v12, v13), (v21, v22, v23), ...

```

ClickHouse会清除数据前所有的空白字符与一个换行符(如果有换行符的话)。所以在进行查询时,我们建议您将数据放入到输入输出格式名称后的新的一行中去(如果数据是以空白字符开始的,这将非常重要)。

@@ -93,7 +93,7 @@ INSERT INTO t FORMAT TabSeparated

### 使用`SELECT`的结果写入 {#inserting-the-results-of-select}

``` sql

-INSERT INTO [db.]table [(c1, c2, c3)] SELECT ...

+INSERT INTO [TABLE] [db.]table [(c1, c2, c3)] SELECT ...

```

写入与SELECT的列的对应关系是使用位置来进行对应的,尽管它们在SELECT表达式与INSERT中的名称可能是不同的。如果需要,会对它们执行对应的类型转换。

diff --git a/programs/install/Install.cpp b/programs/install/Install.cpp

index d7086c95beb..e10a9fea86b 100644

--- a/programs/install/Install.cpp

+++ b/programs/install/Install.cpp

@@ -997,7 +997,9 @@ namespace

{

/// sudo respects limits in /etc/security/limits.conf e.g. open files,

/// that's why we are using it instead of the 'clickhouse su' tool.

- command = fmt::format("sudo -u '{}' {}", user, command);

+ /// By default, sudo resets all environment variables, but we need to preserve
+ /// the values that /etc/init.d/clickhouse loads from /etc/default/clickhouse.

+ command = fmt::format("sudo --preserve-env -u '{}' {}", user, command);

}

fmt::print("Will run {}\n", command);

diff --git a/src/Client/ClientBase.cpp b/src/Client/ClientBase.cpp

index c7288d4793a..9ad6a46866f 100644

--- a/src/Client/ClientBase.cpp

+++ b/src/Client/ClientBase.cpp

@@ -105,6 +105,7 @@ namespace ErrorCodes

extern const int LOGICAL_ERROR;

extern const int CANNOT_OPEN_FILE;

extern const int FILE_ALREADY_EXISTS;

+ extern const int USER_SESSION_LIMIT_EXCEEDED;

}

}

@@ -2408,6 +2409,13 @@ void ClientBase::runInteractive()

}

}

+ if (suggest && suggest->getLastError() == ErrorCodes::USER_SESSION_LIMIT_EXCEEDED)

+ {

+ // If a separate connection loading suggestions failed to open a new session,

+ // use the main session to receive them.

+ suggest->load(*connection, connection_parameters.timeouts, config().getInt("suggestion_limit"));

+ }

+

try

{

if (!processQueryText(input))

diff --git a/src/Client/Suggest.cpp b/src/Client/Suggest.cpp

index 00e0ebd8b91..c854d471fae 100644

--- a/src/Client/Suggest.cpp

+++ b/src/Client/Suggest.cpp

@@ -22,9 +22,11 @@ namespace DB

{

namespace ErrorCodes

{

+ extern const int OK;

extern const int LOGICAL_ERROR;

extern const int UNKNOWN_PACKET_FROM_SERVER;

extern const int DEADLOCK_AVOIDED;

+ extern const int USER_SESSION_LIMIT_EXCEEDED;

}

Suggest::Suggest()

@@ -121,21 +123,24 @@ void Suggest::load(ContextPtr context, const ConnectionParameters & connection_p

}

catch (const Exception & e)

{

+ last_error = e.code();

if (e.code() == ErrorCodes::DEADLOCK_AVOIDED)

continue;

-

- /// Client can successfully connect to the server and

- /// get ErrorCodes::USER_SESSION_LIMIT_EXCEEDED for suggestion connection.

-

- /// We should not use std::cerr here, because this method works concurrently with the main thread.

- /// WriteBufferFromFileDescriptor will write directly to the file descriptor, avoiding data race on std::cerr.

-

- WriteBufferFromFileDescriptor out(STDERR_FILENO, 4096);

- out << "Cannot load data for command line suggestions: " << getCurrentExceptionMessage(false, true) << "\n";

- out.next();

+ else if (e.code() != ErrorCodes::USER_SESSION_LIMIT_EXCEEDED)

+ {

+ /// We should not use std::cerr here, because this method works concurrently with the main thread.

+ /// WriteBufferFromFileDescriptor will write directly to the file descriptor, avoiding data race on std::cerr.

+ ///

+ /// USER_SESSION_LIMIT_EXCEEDED is ignored here. The client will try to receive

+ /// suggestions using the main connection later.

+ WriteBufferFromFileDescriptor out(STDERR_FILENO, 4096);

+ out << "Cannot load data for command line suggestions: " << getCurrentExceptionMessage(false, true) << "\n";

+ out.next();

+ }

}

catch (...)

{

+ last_error = getCurrentExceptionCode();

WriteBufferFromFileDescriptor out(STDERR_FILENO, 4096);

out << "Cannot load data for command line suggestions: " << getCurrentExceptionMessage(false, true) << "\n";

out.next();

@@ -148,6 +153,21 @@ void Suggest::load(ContextPtr context, const ConnectionParameters & connection_p

});

}

+void Suggest::load(IServerConnection & connection,

+ const ConnectionTimeouts & timeouts,

+ Int32 suggestion_limit)

+{

+ try

+ {

+ fetch(connection, timeouts, getLoadSuggestionQuery(suggestion_limit, true));

+ }

+ catch (...)

+ {

+ std::cerr << "Suggestions loading exception: " << getCurrentExceptionMessage(false, true) << std::endl;

+ last_error = getCurrentExceptionCode();

+ }

+}

+

void Suggest::fetch(IServerConnection & connection, const ConnectionTimeouts & timeouts, const std::string & query)

{

connection.sendQuery(

@@ -176,6 +196,7 @@ void Suggest::fetch(IServerConnection & connection, const ConnectionTimeouts & t

return;

case Protocol::Server::EndOfStream:

+ last_error = ErrorCodes::OK;

return;

default:
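The client-side change follows a simple pattern: the background loader records the outcome of its own connection in an atomic `last_error` (declared in `Suggest.h` and initialized to -1), and the interactive loop retries over the main connection when that outcome was `USER_SESSION_LIMIT_EXCEEDED`. The C++ sketch below models that pattern with hypothetical names (`SuggestSketch`, `loadWithFallback`, and the `k...` error constants) and uses callables in place of real connections. The sketch joins the loader thread before checking the result so the outcome is deterministic; whether the real client waits at that point is not shown in this diff.

```cpp
#include <atomic>
#include <cassert>
#include <functional>
#include <thread>
#include <utility>

// Hypothetical stand-ins for ErrorCodes::OK and ErrorCodes::USER_SESSION_LIMIT_EXCEEDED;
// the real numeric values are not assumed here.
constexpr int kOk = 0;
constexpr int kSessionLimitExceeded = 1;

// Hypothetical model of Suggest: each "connection" is a callable returning an error code.
class SuggestSketch
{
public:
    ~SuggestSketch() { wait(); }

    // Load in a background thread over a separate connection.
    void loadAsync(std::function<int()> try_load)
    {
        loading_thread = std::thread([this, try_load = std::move(try_load)] { last_error = try_load(); });
    }

    // Load synchronously over the caller's (main) connection.
    void loadSync(const std::function<int()> & try_load) { last_error = try_load(); }

    void wait()
    {
        if (loading_thread.joinable())
            loading_thread.join();
    }

    int getLastError() const { return last_error.load(); }

private:
    std::thread loading_thread;
    std::atomic<int> last_error{-1}; // initialized to -1, as in the patch
};

// Start the background load, then fall back to the main connection if the
// separate connection could not open a session. Returns the final error code.
int loadWithFallback(SuggestSketch & suggest,
                     std::function<int()> separate_connection,
                     const std::function<int()> & main_connection)
{
    suggest.loadAsync(std::move(separate_connection));
    suggest.wait(); // wait explicitly so the check below sees the background result
    if (suggest.getLastError() == kSessionLimitExceeded)
        suggest.loadSync(main_connection);
    return suggest.getLastError();
}
```

Reusing the main connection only after the dedicated one fails keeps the common case concurrent while still producing suggestions for users who are limited to a single session.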

diff --git a/src/Client/Suggest.h b/src/Client/Suggest.h

index cfe9315879c..5cecdc4501b 100644

--- a/src/Client/Suggest.h

+++ b/src/Client/Suggest.h

@@ -7,6 +7,7 @@

#include

#include

#include

+#include

#include

@@ -28,9 +29,15 @@ public:

template

void load(ContextPtr context, const ConnectionParameters & connection_parameters, Int32 suggestion_limit);

+ void load(IServerConnection & connection,

+ const ConnectionTimeouts & timeouts,

+ Int32 suggestion_limit);

+

/// Older server versions cannot execute the query loading suggestions.

static constexpr int MIN_SERVER_REVISION = DBMS_MIN_PROTOCOL_VERSION_WITH_VIEW_IF_PERMITTED;

+ int getLastError() const { return last_error.load(); }

+

private:

void fetch(IServerConnection & connection, const ConnectionTimeouts & timeouts, const std::string & query);

@@ -38,6 +45,8 @@ private:

/// Words are fetched asynchronously.

std::thread loading_thread;

+

+ std::atomic last_error { -1 };

};

}
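`Suggest` now keeps an atomic `last_error` that starts at -1 and is updated when loading finishes, so a caller can tell whether suggestions loaded. The sketch below is a minimal standalone version of that pattern. It assumes a background thread and uses hypothetical error codes (`kNotLoadedYet`, `kOk`, `kLoadFailed`) in place of ClickHouse's `ErrorCodes`. `SuggestLoader` is an illustrative class, not a ClickHouse type.

```cpp
#include <atomic>
#include <cassert>
#include <stdexcept>
#include <thread>

// Hypothetical error codes; ClickHouse uses its own ErrorCodes values instead.
constexpr int kNotLoadedYet = -1;
constexpr int kOk = 0;
constexpr int kLoadFailed = 1;

class SuggestLoader
{
public:
    // Runs `fetch` on a background thread and records how it finished.
    template <typename Fetch>
    void loadAsync(Fetch fetch)
    {
        assert(!loading_thread.joinable()); // only one load at a time
        loading_thread = std::thread([this, fetch]
        {
            try
            {
                fetch();
                last_error.store(kOk);
            }
            catch (...)
            {
                last_error.store(kLoadFailed);
            }
        });
    }

    void wait()
    {
        if (loading_thread.joinable())
            loading_thread.join();
    }

    // Safe to call from any thread; returns kNotLoadedYet until the load finishes.
    int getLastError() const { return last_error.load(); }

    ~SuggestLoader() { wait(); }

private:
    std::thread loading_thread;
    std::atomic<int> last_error{kNotLoadedYet};
};
```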

diff --git a/src/Common/TransformEndianness.hpp b/src/Common/TransformEndianness.hpp

index 0a9055dde15..05f7778a12e 100644

--- a/src/Common/TransformEndianness.hpp

+++ b/src/Common/TransformEndianness.hpp

@@ -3,23 +3,25 @@

#include

#include

+#include

+

#include

namespace DB

{

-template

+template

requires std::is_integral_v

inline void transformEndianness(T & value)

{

- if constexpr (endian != std::endian::native)

+ if constexpr (ToEndian != FromEndian)

value = std::byteswap(value);

}

-template

+template

requires is_big_int_v

inline void transformEndianness(T & x)

{

- if constexpr (std::endian::native != endian)

+ if constexpr (ToEndian != FromEndian)

{

auto & items = x.items;

std::transform(std::begin(items), std::end(items), std::begin(items), [](auto & item) { return std::byteswap(item); });

@@ -27,42 +29,49 @@ inline void transformEndianness(T & x)

}

}

-template

+template

requires is_decimal

inline void transformEndianness(T & x)

{

- transformEndianness(x.value);

+ transformEndianness(x.value);

}

-template

+template

requires std::is_floating_point_v

inline void transformEndianness(T & value)

{

- if constexpr (std::endian::native != endian)

+ if constexpr (ToEndian != FromEndian)

{

auto * start = reinterpret_cast(&value);

std::reverse(start, start + sizeof(T));

}

}

-template

+template

requires std::is_scoped_enum_v

inline void transformEndianness(T & x)

{

using UnderlyingType = std::underlying_type_t;

- transformEndianness(reinterpret_cast(x));

+ transformEndianness(reinterpret_cast(x));

}

-template

+template

inline void transformEndianness(std::pair & pair)

{

- transformEndianness(pair.first);

- transformEndianness(pair.second);

+ transformEndianness(pair.first);

+ transformEndianness(pair.second);

}

-template

+template

inline void transformEndianness(StrongTypedef & x)

{

- transformEndianness(x.toUnderType());

+ transformEndianness(x.toUnderType());

+}

+

+template

+inline void transformEndianness(CityHash_v1_0_2::uint128 & x)

+{

+ transformEndianness(x.low64);

+ transformEndianness(x.high64);

}

}

diff --git a/src/Common/ZooKeeper/ZooKeeper.cpp b/src/Common/ZooKeeper/ZooKeeper.cpp

index 0fe536b1a08..10331a4e410 100644

--- a/src/Common/ZooKeeper/ZooKeeper.cpp

+++ b/src/Common/ZooKeeper/ZooKeeper.cpp

@@ -152,7 +152,7 @@ void ZooKeeper::init(ZooKeeperArgs args_)

throw KeeperException(code, "/");

if (code == Coordination::Error::ZNONODE)

- throw KeeperException("ZooKeeper root doesn't exist. You should create root node " + args.chroot + " before start.", Coordination::Error::ZNONODE);

+ throw KeeperException(Coordination::Error::ZNONODE, "ZooKeeper root doesn't exist. You should create root node {} before start.", args.chroot);

}

}

@@ -491,7 +491,7 @@ std::string ZooKeeper::get(const std::string & path, Coordination::Stat * stat,

if (tryGet(path, res, stat, watch, &code))

return res;

else

- throw KeeperException("Can't get data for node " + path + ": node doesn't exist", code);

+ throw KeeperException(code, "Can't get data for node '{}': node doesn't exist", path);

}

std::string ZooKeeper::getWatch(const std::string & path, Coordination::Stat * stat, Coordination::WatchCallback watch_callback)

@@ -501,7 +501,7 @@ std::string ZooKeeper::getWatch(const std::string & path, Coordination::Stat * s

if (tryGetWatch(path, res, stat, watch_callback, &code))

return res;

else

- throw KeeperException("Can't get data for node " + path + ": node doesn't exist", code);

+ throw KeeperException(code, "Can't get data for node '{}': node doesn't exist", path);

}

bool ZooKeeper::tryGet(

diff --git a/src/Common/ZooKeeper/ZooKeeperArgs.cpp b/src/Common/ZooKeeper/ZooKeeperArgs.cpp

index 198d4ccdea7..4c73b9ffc6d 100644

--- a/src/Common/ZooKeeper/ZooKeeperArgs.cpp

+++ b/src/Common/ZooKeeper/ZooKeeperArgs.cpp

@@ -213,7 +213,7 @@ void ZooKeeperArgs::initFromKeeperSection(const Poco::Util::AbstractConfiguratio

};

}

else

- throw KeeperException(std::string("Unknown key ") + key + " in config file", Coordination::Error::ZBADARGUMENTS);

+ throw KeeperException(Coordination::Error::ZBADARGUMENTS, "Unknown key {} in config file", key);

}

}

diff --git a/src/DataTypes/Serializations/SerializationNumber.cpp b/src/DataTypes/Serializations/SerializationNumber.cpp

index 0294a1c8a67..df6c0848bbe 100644

--- a/src/DataTypes/Serializations/SerializationNumber.cpp

+++ b/src/DataTypes/Serializations/SerializationNumber.cpp

@@ -10,6 +10,8 @@

#include

#include

+#include

+

namespace DB

{

@@ -135,13 +137,25 @@ template

void SerializationNumber::serializeBinaryBulk(const IColumn & column, WriteBuffer & ostr, size_t offset, size_t limit) const

{

const typename ColumnVector::Container & x = typeid_cast &>(column).getData();

-

- size_t size = x.size();

-

- if (limit == 0 || offset + limit > size)

+ if (const size_t size = x.size(); limit == 0 || offset + limit > size)

limit = size - offset;

- if (limit)

+ if (limit == 0)

+ return;

+

+ if constexpr (std::endian::native == std::endian::big && sizeof(T) >= 2)

+ {

+ static constexpr auto to_little_endian = [](auto i)

+ {

+ transformEndianness(i);

+ return i;

+ };

+

+ std::ranges::for_each(

+ x | std::views::drop(offset) | std::views::take(limit) | std::views::transform(to_little_endian),

+ [&ostr](const auto & i) { ostr.write(reinterpret_cast(&i), sizeof(typename ColumnVector::ValueType)); });

+ }

+ else

ostr.write(reinterpret_cast(&x[offset]), sizeof(typename ColumnVector::ValueType) * limit);

}

@@ -149,10 +163,13 @@ template

void SerializationNumber::deserializeBinaryBulk(IColumn & column, ReadBuffer & istr, size_t limit, double /*avg_value_size_hint*/) const

{

typename ColumnVector::Container & x = typeid_cast &>(column).getData();

- size_t initial_size = x.size();

+ const size_t initial_size = x.size();

x.resize(initial_size + limit);

- size_t size = istr.readBig(reinterpret_cast(&x[initial_size]), sizeof(typename ColumnVector::ValueType) * limit);

+ const size_t size = istr.readBig(reinterpret_cast(&x[initial_size]), sizeof(typename ColumnVector::ValueType) * limit);

x.resize(initial_size + size / sizeof(typename ColumnVector::ValueType));

+

+ if constexpr (std::endian::native == std::endian::big && sizeof(T) >= 2)

+ std::ranges::for_each(x | std::views::drop(initial_size), [](auto & i) { transformEndianness(i); });

}

template class SerializationNumber;

diff --git a/src/Formats/registerFormats.cpp b/src/Formats/registerFormats.cpp

index 29ef46f330f..580db61edde 100644

--- a/src/Formats/registerFormats.cpp

+++ b/src/Formats/registerFormats.cpp

@@ -101,6 +101,7 @@ void registerInputFormatJSONAsObject(FormatFactory & factory);

void registerInputFormatLineAsString(FormatFactory & factory);

void registerInputFormatMySQLDump(FormatFactory & factory);

void registerInputFormatParquetMetadata(FormatFactory & factory);

+void registerInputFormatOne(FormatFactory & factory);

#if USE_HIVE

void registerInputFormatHiveText(FormatFactory & factory);

@@ -142,6 +143,7 @@ void registerTemplateSchemaReader(FormatFactory & factory);

void registerMySQLSchemaReader(FormatFactory & factory);

void registerBSONEachRowSchemaReader(FormatFactory & factory);

void registerParquetMetadataSchemaReader(FormatFactory & factory);

+void registerOneSchemaReader(FormatFactory & factory);

void registerFileExtensions(FormatFactory & factory);

@@ -243,6 +245,7 @@ void registerFormats()

registerInputFormatMySQLDump(factory);

registerInputFormatParquetMetadata(factory);

+ registerInputFormatOne(factory);

registerNonTrivialPrefixAndSuffixCheckerJSONEachRow(factory);

registerNonTrivialPrefixAndSuffixCheckerJSONAsString(factory);

@@ -279,6 +282,7 @@ void registerFormats()

registerMySQLSchemaReader(factory);

registerBSONEachRowSchemaReader(factory);

registerParquetMetadataSchemaReader(factory);

+ registerOneSchemaReader(factory);

}

}

diff --git a/src/Functions/FunctionsHashing.h b/src/Functions/FunctionsHashing.h

index a6a04b4e313..fb40c59fa8a 100644

--- a/src/Functions/FunctionsHashing.h

+++ b/src/Functions/FunctionsHashing.h

@@ -1374,8 +1374,8 @@ public:

if constexpr (std::is_same_v) /// backward-compatible

{

- if (std::endian::native == std::endian::big)

- std::ranges::for_each(col_to->getData(), transformEndianness);

+ if constexpr (std::endian::native == std::endian::big)

+ std::ranges::for_each(col_to->getData(), transformEndianness);

auto col_to_fixed_string = ColumnFixedString::create(sizeof(UInt128));

const auto & data = col_to->getData();

diff --git a/src/IO/S3/Client.cpp b/src/IO/S3/Client.cpp

index 51c7ee32579..7e251dc415a 100644

--- a/src/IO/S3/Client.cpp

+++ b/src/IO/S3/Client.cpp

@@ -188,7 +188,7 @@ Client::Client(

}

}

- LOG_TRACE(log, "API mode: {}", toString(api_mode));

+ LOG_TRACE(log, "API mode of the S3 client: {}", api_mode);

detect_region = provider_type == ProviderType::AWS && explicit_region == Aws::Region::AWS_GLOBAL;

diff --git a/src/Interpreters/ClusterProxy/SelectStreamFactory.h b/src/Interpreters/ClusterProxy/SelectStreamFactory.h

index 1cc5a3b1a77..ca07fd5deda 100644

--- a/src/Interpreters/ClusterProxy/SelectStreamFactory.h

+++ b/src/Interpreters/ClusterProxy/SelectStreamFactory.h

@@ -60,9 +60,6 @@ public:

/// (When there is a local replica with big delay).

bool lazy = false;

time_t local_delay = 0;

-

- /// Set only if parallel reading from replicas is used.

- std::shared_ptr coordinator;

};

using Shards = std::vector;

diff --git a/src/Interpreters/ClusterProxy/executeQuery.cpp b/src/Interpreters/ClusterProxy/executeQuery.cpp

index 2fed626ffb7..f2d7132b174 100644

--- a/src/Interpreters/ClusterProxy/executeQuery.cpp

+++ b/src/Interpreters/ClusterProxy/executeQuery.cpp

@@ -28,7 +28,6 @@ namespace DB

namespace ErrorCodes

{

extern const int TOO_LARGE_DISTRIBUTED_DEPTH;

- extern const int LOGICAL_ERROR;

extern const int SUPPORT_IS_DISABLED;

}

@@ -281,7 +280,6 @@ void executeQueryWithParallelReplicas(

auto all_replicas_count = std::min(static_cast(settings.max_parallel_replicas), new_cluster->getShardCount());

auto coordinator = std::make_shared(all_replicas_count);

auto remote_plan = std::make_unique();

- auto plans = std::vector();

/// This is a little bit weird, but we construct an "empty" coordinator without

/// any specified reading/coordination method (like Default, InOrder, InReverseOrder)

@@ -309,20 +307,7 @@ void executeQueryWithParallelReplicas(

&Poco::Logger::get("ReadFromParallelRemoteReplicasStep"),

query_info.storage_limits);

- remote_plan->addStep(std::move(read_from_remote));

- remote_plan->addInterpreterContext(context);

- plans.emplace_back(std::move(remote_plan));

-

- if (std::all_of(plans.begin(), plans.end(), [](const QueryPlanPtr & plan) { return !plan; }))

- throw Exception(ErrorCodes::LOGICAL_ERROR, "No plans were generated for reading from shard. This is a bug");

-

- DataStreams input_streams;

- input_streams.reserve(plans.size());

- for (const auto & plan : plans)

- input_streams.emplace_back(plan->getCurrentDataStream());

-

- auto union_step = std::make_unique(std::move(input_streams));

- query_plan.unitePlans(std::move(union_step), std::move(plans));

+ query_plan.addStep(std::move(read_from_remote));

}

}

diff --git a/src/Interpreters/InterpreterSelectQueryAnalyzer.cpp b/src/Interpreters/InterpreterSelectQueryAnalyzer.cpp

index 8db1d27c073..b8cace5e0ad 100644

--- a/src/Interpreters/InterpreterSelectQueryAnalyzer.cpp

+++ b/src/Interpreters/InterpreterSelectQueryAnalyzer.cpp

@@ -184,7 +184,7 @@ InterpreterSelectQueryAnalyzer::InterpreterSelectQueryAnalyzer(

, context(buildContext(context_, select_query_options_))

, select_query_options(select_query_options_)

, query_tree(query_tree_)

- , planner(query_tree_, select_query_options_)

+ , planner(query_tree_, select_query_options)

{

}

diff --git a/src/Interpreters/Session.cpp b/src/Interpreters/Session.cpp

index f8bd70afdb6..bcfaae40a03 100644

--- a/src/Interpreters/Session.cpp

+++ b/src/Interpreters/Session.cpp

@@ -299,6 +299,7 @@ Session::~Session()

if (notified_session_log_about_login)

{

+ LOG_DEBUG(log, "{} Logout, user_id: {}", toString(auth_id), toString(*user_id));

if (auto session_log = getSessionLog())

{

/// TODO: We have to ensure that the same info is added to the session log on a LoginSuccess event and on the corresponding Logout event.

@@ -320,6 +321,7 @@ AuthenticationType Session::getAuthenticationTypeOrLogInFailure(const String & u

}

catch (const Exception & e)

{

+ LOG_ERROR(log, "{} Authentication failed with error: {}", toString(auth_id), e.what());

if (auto session_log = getSessionLog())

session_log->addLoginFailure(auth_id, getClientInfo(), user_name, e);

diff --git a/src/Interpreters/executeQuery.cpp b/src/Interpreters/executeQuery.cpp

index 578ca3b41f9..a56007375f4 100644

--- a/src/Interpreters/executeQuery.cpp

+++ b/src/Interpreters/executeQuery.cpp

@@ -45,6 +45,7 @@

#include

#include

#include

+#include

#include

#include

#include

@@ -1033,6 +1034,11 @@ static std::tuple executeQueryImpl(

}

+ // InterpreterSelectQueryAnalyzer does not build QueryPlan in the constructor.

+ // We need to force it to be built here to check whether the quota should be ignored.

+ if (auto * interpreter_with_analyzer = dynamic_cast(interpreter.get()))

+ interpreter_with_analyzer->getQueryPlan();

+

if (!interpreter->ignoreQuota() && !quota_checked)

{

quota = context->getQuota();

diff --git a/src/Planner/Planner.cpp b/src/Planner/Planner.cpp

index 9f6c22f90f3..7cce495dfb8 100644

--- a/src/Planner/Planner.cpp

+++ b/src/Planner/Planner.cpp

@@ -1047,7 +1047,7 @@ PlannerContextPtr buildPlannerContext(const QueryTreeNodePtr & query_tree_node,

}

Planner::Planner(const QueryTreeNodePtr & query_tree_,

- const SelectQueryOptions & select_query_options_)

+ SelectQueryOptions & select_query_options_)

: query_tree(query_tree_)

, select_query_options(select_query_options_)

, planner_context(buildPlannerContext(query_tree, select_query_options, std::make_shared()))

@@ -1055,7 +1055,7 @@ Planner::Planner(const QueryTreeNodePtr & query_tree_,

}

Planner::Planner(const QueryTreeNodePtr & query_tree_,

- const SelectQueryOptions & select_query_options_,

+ SelectQueryOptions & select_query_options_,

GlobalPlannerContextPtr global_planner_context_)

: query_tree(query_tree_)

, select_query_options(select_query_options_)

@@ -1064,7 +1064,7 @@ Planner::Planner(const QueryTreeNodePtr & query_tree_,

}

Planner::Planner(const QueryTreeNodePtr & query_tree_,

- const SelectQueryOptions & select_query_options_,

+ SelectQueryOptions & select_query_options_,

PlannerContextPtr planner_context_)

: query_tree(query_tree_)

, select_query_options(select_query_options_)

diff --git a/src/Planner/Planner.h b/src/Planner/Planner.h

index 783a07f6e99..f8d151365cf 100644

--- a/src/Planner/Planner.h

+++ b/src/Planner/Planner.h

@@ -22,16 +22,16 @@ class Planner

public:

/// Initialize planner with query tree after analysis phase

Planner(const QueryTreeNodePtr & query_tree_,

- const SelectQueryOptions & select_query_options_);

+ SelectQueryOptions & select_query_options_);

/// Initialize planner with query tree after query analysis phase and global planner context

Planner(const QueryTreeNodePtr & query_tree_,

- const SelectQueryOptions & select_query_options_,

+ SelectQueryOptions & select_query_options_,

GlobalPlannerContextPtr global_planner_context_);

/// Initialize planner with query tree after query analysis phase and planner context

Planner(const QueryTreeNodePtr & query_tree_,

- const SelectQueryOptions & select_query_options_,

+ SelectQueryOptions & select_query_options_,

PlannerContextPtr planner_context_);

const QueryPlan & getQueryPlan() const

@@ -66,7 +66,7 @@ private:

void buildPlanForQueryNode();

QueryTreeNodePtr query_tree;

- SelectQueryOptions select_query_options;

+ SelectQueryOptions & select_query_options;

PlannerContextPtr planner_context;

QueryPlan query_plan;

StorageLimitsList storage_limits;

diff --git a/src/Planner/PlannerJoinTree.cpp b/src/Planner/PlannerJoinTree.cpp

index 56a48ce8328..f6ce029a295 100644

--- a/src/Planner/PlannerJoinTree.cpp

+++ b/src/Planner/PlannerJoinTree.cpp

@@ -113,6 +113,20 @@ void checkAccessRights(const TableNode & table_node, const Names & column_names,

query_context->checkAccess(AccessType::SELECT, storage_id, column_names);

}

+bool shouldIgnoreQuotaAndLimits(const TableNode & table_node)

+{

+ const auto & storage_id = table_node.getStorageID();

+ if (!storage_id.hasDatabase())

+ return false;

+ if (storage_id.database_name == DatabaseCatalog::SYSTEM_DATABASE)

+ {

+ static const boost::container::flat_set tables_ignoring_quota{"quotas", "quota_limits", "quota_usage", "quotas_usage", "one"};

+ if (tables_ignoring_quota.count(storage_id.table_name))

+ return true;

+ }

+ return false;

+}

+

NameAndTypePair chooseSmallestColumnToReadFromStorage(const StoragePtr & storage, const StorageSnapshotPtr & storage_snapshot)

{

/** We need to read at least one column to find the number of rows.

@@ -828,8 +842,9 @@ JoinTreeQueryPlan buildQueryPlanForTableExpression(QueryTreeNodePtr table_expres

}

else

{

+ SelectQueryOptions analyze_query_options = SelectQueryOptions(from_stage).analyze();

Planner planner(select_query_info.query_tree,

- SelectQueryOptions(from_stage).analyze(),

+ analyze_query_options,

select_query_info.planner_context);

planner.buildQueryPlanIfNeeded();

@@ -1375,7 +1390,7 @@ JoinTreeQueryPlan buildQueryPlanForArrayJoinNode(const QueryTreeNodePtr & array_

JoinTreeQueryPlan buildJoinTreeQueryPlan(const QueryTreeNodePtr & query_node,

const SelectQueryInfo & select_query_info,

- const SelectQueryOptions & select_query_options,

+ SelectQueryOptions & select_query_options,

const ColumnIdentifierSet & outer_scope_columns,

PlannerContextPtr & planner_context)

{

@@ -1386,6 +1401,16 @@ JoinTreeQueryPlan buildJoinTreeQueryPlan(const QueryTreeNodePtr & query_node,

std::vector table_expressions_outer_scope_columns(table_expressions_stack_size);

ColumnIdentifierSet current_outer_scope_columns = outer_scope_columns;

+ if (is_single_table_expression)

+ {

+ auto * table_node = table_expressions_stack[0]->as();

+ if (table_node && shouldIgnoreQuotaAndLimits(*table_node))

+ {

+ select_query_options.ignore_quota = true;

+ select_query_options.ignore_limits = true;

+ }

+ }

+

/// For each table, table function, query, union table expressions prepare before query plan build

for (size_t i = 0; i < table_expressions_stack_size; ++i)

{

diff --git a/src/Planner/PlannerJoinTree.h b/src/Planner/PlannerJoinTree.h

index acbc96ddae0..9d3b98175d0 100644

--- a/src/Planner/PlannerJoinTree.h

+++ b/src/Planner/PlannerJoinTree.h

@@ -20,7 +20,7 @@ struct JoinTreeQueryPlan

/// Build JOIN TREE query plan for query node

JoinTreeQueryPlan buildJoinTreeQueryPlan(const QueryTreeNodePtr & query_node,

const SelectQueryInfo & select_query_info,

- const SelectQueryOptions & select_query_options,

+ SelectQueryOptions & select_query_options,

const ColumnIdentifierSet & outer_scope_columns,

PlannerContextPtr & planner_context);

diff --git a/src/Processors/Formats/Impl/OneFormat.cpp b/src/Processors/Formats/Impl/OneFormat.cpp

new file mode 100644

index 00000000000..4a9c8caebf3

--- /dev/null

+++ b/src/Processors/Formats/Impl/OneFormat.cpp

@@ -0,0 +1,57 @@

+#include

+#include

+#include

+

+namespace DB

+{

+

+namespace ErrorCodes

+{

+ extern const int BAD_ARGUMENTS;

+}

+

+OneInputFormat::OneInputFormat(const Block & header, ReadBuffer & in_) : IInputFormat(header, &in_)

+{

+ if (header.columns() != 1)

+ throw Exception(ErrorCodes::BAD_ARGUMENTS,

+ "One input format is only suitable for tables with a single column of type UInt8 but the number of columns is {}",

+ header.columns());

+

+ if (!WhichDataType(header.getByPosition(0).type).isUInt8())

+ throw Exception(ErrorCodes::BAD_ARGUMENTS,

+ "One input format is only suitable for tables with a single column of type UInt8 but the column type is {}",

+ header.getByPosition(0).type->getName());

+}

+

+Chunk OneInputFormat::generate()

+{

+ if (done)

+ return {};

+

+ done = true;

+ auto column = ColumnUInt8::create();

+ column->insertDefault();

+ return Chunk(Columns{std::move(column)}, 1);

+}

+

+void registerInputFormatOne(FormatFactory & factory)

+{

+ factory.registerInputFormat("One", [](

+ ReadBuffer & buf,

+ const Block & sample,

+ const RowInputFormatParams &,

+ const FormatSettings &)

+ {

+ return std::make_shared(sample, buf);

+ });

+}

+

+void registerOneSchemaReader(FormatFactory & factory)

+{

+ factory.registerExternalSchemaReader("One", [](const FormatSettings &)

+ {

+ return std::make_shared();

+ });

+}

+

+}

diff --git a/src/Processors/Formats/Impl/OneFormat.h b/src/Processors/Formats/Impl/OneFormat.h

new file mode 100644

index 00000000000..f73b2dab66a

--- /dev/null

+++ b/src/Processors/Formats/Impl/OneFormat.h

@@ -0,0 +1,32 @@

+#pragma once

+#include

+#include

+#include

+

+namespace DB

+{

+

+class OneInputFormat final : public IInputFormat

+{

+public:

+ OneInputFormat(const Block & header, ReadBuffer & in_);

+

+ String getName() const override { return "One"; }

+

+protected:

+ Chunk generate() override;

+

+private:

+ bool done = false;

+};

+

+class OneSchemaReader: public IExternalSchemaReader

+{

+public:

+ NamesAndTypesList readSchema() override

+ {

+ return {{"dummy", std::make_shared()}};

+ }

+};

+

+}
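The new `One` input format ignores its input. It produces exactly one row containing the default `UInt8` value (0) and then signals end of data. The sketch below is a rough version of that one-shot generator. It assumes a hypothetical header model (`ColumnHeader`) and uses `std::optional` in place of an empty `Chunk`.

```cpp
#include <cassert>
#include <cstdint>
#include <optional>
#include <stdexcept>
#include <string>
#include <vector>

// Hypothetical, simplified column description.
struct ColumnHeader
{
    std::string name;
    std::string type;
};

class OneRowSource
{
public:
    explicit OneRowSource(const std::vector<ColumnHeader> & header)
    {
        if (header.size() != 1)
            throw std::invalid_argument("One format needs exactly one column");
        if (header[0].type != "UInt8")
            throw std::invalid_argument("One format needs a UInt8 column, got " + header[0].type);
    }

    // First call returns a single-row column holding 0; later calls return nothing.
    std::optional<std::vector<std::uint8_t>> generate()
    {
        if (done)
            return std::nullopt;
        done = true;
        return std::vector<std::uint8_t>{0};
    }

private:
    bool done = false;
};
```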

diff --git a/src/Storages/MergeTree/MergeTreeData.cpp b/src/Storages/MergeTree/MergeTreeData.cpp

index db0a7b34d7e..da0a6328894 100644

--- a/src/Storages/MergeTree/MergeTreeData.cpp

+++ b/src/Storages/MergeTree/MergeTreeData.cpp

@@ -8435,7 +8435,7 @@ void MergeTreeData::incrementMergedPartsProfileEvent(MergeTreeDataPartType type)

}

}

-MergeTreeData::MutableDataPartPtr MergeTreeData::createEmptyPart(

+std::pair MergeTreeData::createEmptyPart(

MergeTreePartInfo & new_part_info, const MergeTreePartition & partition, const String & new_part_name,

const MergeTreeTransactionPtr & txn)

{

@@ -8454,6 +8454,7 @@ MergeTreeData::MutableDataPartPtr MergeTreeData::createEmptyPart(

ReservationPtr reservation = reserveSpacePreferringTTLRules(metadata_snapshot, 0, move_ttl_infos, time(nullptr), 0, true);

VolumePtr data_part_volume = createVolumeFromReservation(reservation, volume);

+ auto tmp_dir_holder = getTemporaryPartDirectoryHolder(EMPTY_PART_TMP_PREFIX + new_part_name);

auto new_data_part = getDataPartBuilder(new_part_name, data_part_volume, EMPTY_PART_TMP_PREFIX + new_part_name)

.withBytesAndRowsOnDisk(0, 0)

.withPartInfo(new_part_info)

@@ -8513,7 +8514,7 @@ MergeTreeData::MutableDataPartPtr MergeTreeData::createEmptyPart(

out.finalizePart(new_data_part, sync_on_insert);

new_data_part_storage->precommitTransaction();

- return new_data_part;

+ return std::make_pair(std::move(new_data_part), std::move(tmp_dir_holder));

}

bool MergeTreeData::allowRemoveStaleMovingParts() const

diff --git a/src/Storages/MergeTree/MergeTreeData.h b/src/Storages/MergeTree/MergeTreeData.h

index 9ee61134740..e4801cffa36 100644

--- a/src/Storages/MergeTree/MergeTreeData.h

+++ b/src/Storages/MergeTree/MergeTreeData.h

@@ -936,7 +936,9 @@ public:

WriteAheadLogPtr getWriteAheadLog();

constexpr static auto EMPTY_PART_TMP_PREFIX = "tmp_empty_";

- MergeTreeData::MutableDataPartPtr createEmptyPart(MergeTreePartInfo & new_part_info, const MergeTreePartition & partition, const String & new_part_name, const MergeTreeTransactionPtr & txn);

+ std::pair createEmptyPart(

+ MergeTreePartInfo & new_part_info, const MergeTreePartition & partition,

+ const String & new_part_name, const MergeTreeTransactionPtr & txn);

MergeTreeDataFormatVersion format_version;

diff --git a/src/Storages/MergeTree/MergeTreeDataPartChecksum.cpp b/src/Storages/MergeTree/MergeTreeDataPartChecksum.cpp

index 6628cd68eaf..5a7b2dfbca8 100644

--- a/src/Storages/MergeTree/MergeTreeDataPartChecksum.cpp

+++ b/src/Storages/MergeTree/MergeTreeDataPartChecksum.cpp

@@ -187,15 +187,15 @@ bool MergeTreeDataPartChecksums::readV3(ReadBuffer & in)

String name;

Checksum sum;

- readBinary(name, in);

+ readStringBinary(name, in);

readVarUInt(sum.file_size, in);

- readPODBinary(sum.file_hash, in);

- readBinary(sum.is_compressed, in);

+ readBinaryLittleEndian(sum.file_hash, in);

+ readBinaryLittleEndian(sum.is_compressed, in);

if (sum.is_compressed)

{

readVarUInt(sum.uncompressed_size, in);

- readPODBinary(sum.uncompressed_hash, in);

+ readBinaryLittleEndian(sum.uncompressed_hash, in);

}

files.emplace(std::move(name), sum);

@@ -223,15 +223,15 @@ void MergeTreeDataPartChecksums::write(WriteBuffer & to) const

const String & name = it.first;

const Checksum & sum = it.second;

- writeBinary(name, out);

+ writeStringBinary(name, out);

writeVarUInt(sum.file_size, out);

- writePODBinary(sum.file_hash, out);

- writeBinary(sum.is_compressed, out);

+ writeBinaryLittleEndian(sum.file_hash, out);

+ writeBinaryLittleEndian(sum.is_compressed, out);

if (sum.is_compressed)

{

writeVarUInt(sum.uncompressed_size, out);

- writePODBinary(sum.uncompressed_hash, out);

+ writeBinaryLittleEndian(sum.uncompressed_hash, out);

}

}

}

@@ -339,9 +339,9 @@ void MinimalisticDataPartChecksums::serializeWithoutHeader(WriteBuffer & to) con

writeVarUInt(num_compressed_files, to);

writeVarUInt(num_uncompressed_files, to);

- writePODBinary(hash_of_all_files, to);

- writePODBinary(hash_of_uncompressed_files, to);

- writePODBinary(uncompressed_hash_of_compressed_files, to);

+ writeBinaryLittleEndian(hash_of_all_files, to);

+ writeBinaryLittleEndian(hash_of_uncompressed_files, to);

+ writeBinaryLittleEndian(uncompressed_hash_of_compressed_files, to);

}

String MinimalisticDataPartChecksums::getSerializedString() const

@@ -382,9 +382,9 @@ void MinimalisticDataPartChecksums::deserializeWithoutHeader(ReadBuffer & in)

readVarUInt(num_compressed_files, in);

readVarUInt(num_uncompressed_files, in);

- readPODBinary(hash_of_all_files, in);

- readPODBinary(hash_of_uncompressed_files, in);

- readPODBinary(uncompressed_hash_of_compressed_files, in);

+ readBinaryLittleEndian(hash_of_all_files, in);

+ readBinaryLittleEndian(hash_of_uncompressed_files, in);

+ readBinaryLittleEndian(uncompressed_hash_of_compressed_files, in);

}

void MinimalisticDataPartChecksums::computeTotalChecksums(const MergeTreeDataPartChecksums & full_checksums_)

diff --git a/src/Storages/MergeTree/MergeTreeDataPartWriterCompact.cpp b/src/Storages/MergeTree/MergeTreeDataPartWriterCompact.cpp

index 5e1da21da5b..75e6aca0793 100644

--- a/src/Storages/MergeTree/MergeTreeDataPartWriterCompact.cpp

+++ b/src/Storages/MergeTree/MergeTreeDataPartWriterCompact.cpp

@@ -365,8 +365,9 @@ void MergeTreeDataPartWriterCompact::addToChecksums(MergeTreeDataPartChecksums &

{

uncompressed_size += stream->hashing_buf.count();

auto stream_hash = stream->hashing_buf.getHash();

+ transformEndianness(stream_hash);

uncompressed_hash = CityHash_v1_0_2::CityHash128WithSeed(

- reinterpret_cast(&stream_hash), sizeof(stream_hash), uncompressed_hash);

+ reinterpret_cast(&stream_hash), sizeof(stream_hash), uncompressed_hash);

}

checksums.files[data_file_name].is_compressed = true;

diff --git a/src/Storages/StorageDistributed.cpp b/src/Storages/StorageDistributed.cpp

index a7aeb11e2d8..f80e498efa8 100644

--- a/src/Storages/StorageDistributed.cpp

+++ b/src/Storages/StorageDistributed.cpp

@@ -691,7 +691,11 @@ QueryTreeNodePtr buildQueryTreeDistributed(SelectQueryInfo & query_info,

if (remote_storage_id.hasDatabase())

resolved_remote_storage_id = query_context->resolveStorageID(remote_storage_id);

- auto storage = std::make_shared(resolved_remote_storage_id, distributed_storage_snapshot->metadata->getColumns(), distributed_storage_snapshot->object_columns);

+ auto get_column_options = GetColumnsOptions(GetColumnsOptions::All).withExtendedObjects().withVirtuals();

+

+ auto column_names_and_types = distributed_storage_snapshot->getColumns(get_column_options);

+

+ auto storage = std::make_shared(resolved_remote_storage_id, ColumnsDescription{column_names_and_types});

auto table_node = std::make_shared(std::move(storage), query_context);

if (table_expression_modifiers)

diff --git a/src/Storages/StorageMergeTree.cpp b/src/Storages/StorageMergeTree.cpp

index ad9013d9f13..a22c1355015 100644

--- a/src/Storages/StorageMergeTree.cpp

+++ b/src/Storages/StorageMergeTree.cpp

@@ -1653,11 +1653,7 @@ struct FutureNewEmptyPart

MergeTreePartition partition;

std::string part_name;

- scope_guard tmp_dir_guard;

-

StorageMergeTree::MutableDataPartPtr data_part;

-

- std::string getDirName() const { return StorageMergeTree::EMPTY_PART_TMP_PREFIX + part_name; }

};

using FutureNewEmptyParts = std::vector;

@@ -1688,19 +1684,19 @@ FutureNewEmptyParts initCoverageWithNewEmptyParts(const DataPartsVector & old_pa

return future_parts;

}

-StorageMergeTree::MutableDataPartsVector createEmptyDataParts(MergeTreeData & data, FutureNewEmptyParts & future_parts, const MergeTreeTransactionPtr & txn)

+std::pair> createEmptyDataParts(

+ MergeTreeData & data, FutureNewEmptyParts & future_parts, const MergeTreeTransactionPtr & txn)

{

- StorageMergeTree::MutableDataPartsVector data_parts;

+ std::pair> data_parts;

for (auto & part: future_parts)

- data_parts.push_back(data.createEmptyPart(part.part_info, part.partition, part.part_name, txn));

+ {

+ auto [new_data_part, tmp_dir_holder] = data.createEmptyPart(part.part_info, part.partition, part.part_name, txn);

+ data_parts.first.emplace_back(std::move(new_data_part));

+ data_parts.second.emplace_back(std::move(tmp_dir_holder));

+ }

return data_parts;

}

-void captureTmpDirectoryHolders(MergeTreeData & data, FutureNewEmptyParts & future_parts)

-{

- for (auto & part : future_parts)

- part.tmp_dir_guard = data.getTemporaryPartDirectoryHolder(part.getDirName());

-}

void StorageMergeTree::renameAndCommitEmptyParts(MutableDataPartsVector & new_parts, Transaction & transaction)

{

@@ -1767,9 +1763,7 @@ void StorageMergeTree::truncate(const ASTPtr &, const StorageMetadataPtr &, Cont

fmt::join(getPartsNames(future_parts), ", "), fmt::join(getPartsNames(parts), ", "),

transaction.getTID());

- captureTmpDirectoryHolders(*this, future_parts);

-

- auto new_data_parts = createEmptyDataParts(*this, future_parts, txn);

+ auto [new_data_parts, tmp_dir_holders] = createEmptyDataParts(*this, future_parts, txn);

renameAndCommitEmptyParts(new_data_parts, transaction);

PartLog::addNewParts(query_context, PartLog::createPartLogEntries(new_data_parts, watch.elapsed(), profile_events_scope.getSnapshot()));

@@ -1828,9 +1822,7 @@ void StorageMergeTree::dropPart(const String & part_name, bool detach, ContextPt

fmt::join(getPartsNames(future_parts), ", "), fmt::join(getPartsNames({part}), ", "),

transaction.getTID());

- captureTmpDirectoryHolders(*this, future_parts);

-

- auto new_data_parts = createEmptyDataParts(*this, future_parts, txn);

+ auto [new_data_parts, tmp_dir_holders] = createEmptyDataParts(*this, future_parts, txn);

renameAndCommitEmptyParts(new_data_parts, transaction);

PartLog::addNewParts(query_context, PartLog::createPartLogEntries(new_data_parts, watch.elapsed(), profile_events_scope.getSnapshot()));

@@ -1914,9 +1906,8 @@ void StorageMergeTree::dropPartition(const ASTPtr & partition, bool detach, Cont

fmt::join(getPartsNames(future_parts), ", "), fmt::join(getPartsNames(parts), ", "),

transaction.getTID());

- captureTmpDirectoryHolders(*this, future_parts);

- auto new_data_parts = createEmptyDataParts(*this, future_parts, txn);

+ auto [new_data_parts, tmp_dir_holders] = createEmptyDataParts(*this, future_parts, txn);

renameAndCommitEmptyParts(new_data_parts, transaction);

PartLog::addNewParts(query_context, PartLog::createPartLogEntries(new_data_parts, watch.elapsed(), profile_events_scope.getSnapshot()));

diff --git a/src/Storages/StorageReplicatedMergeTree.cpp b/src/Storages/StorageReplicatedMergeTree.cpp

index 7fce373e26b..a1bf04c0ead 100644

--- a/src/Storages/StorageReplicatedMergeTree.cpp

+++ b/src/Storages/StorageReplicatedMergeTree.cpp

@@ -9509,7 +9509,7 @@ bool StorageReplicatedMergeTree::createEmptyPartInsteadOfLost(zkutil::ZooKeeperP

}

}

- MergeTreeData::MutableDataPartPtr new_data_part = createEmptyPart(new_part_info, partition, lost_part_name, NO_TRANSACTION_PTR);

+ auto [new_data_part, tmp_dir_holder] = createEmptyPart(new_part_info, partition, lost_part_name, NO_TRANSACTION_PTR);

new_data_part->setName(lost_part_name);

try

diff --git a/src/Storages/System/StorageSystemTables.cpp b/src/Storages/System/StorageSystemTables.cpp

index 60dfc3a75e8..715c98ee92a 100644

--- a/src/Storages/System/StorageSystemTables.cpp

+++ b/src/Storages/System/StorageSystemTables.cpp

@@ -108,6 +108,22 @@ static ColumnPtr getFilteredTables(const ASTPtr & query, const ColumnPtr & filte

return block.getByPosition(0).column;

}

+/// Avoid heavy operation on tables if we only queried columns that we can get without table object.

+/// Otherwise it will require table initialization for Lazy database.

+static bool needTable(const DatabasePtr & database, const Block & header)

+{

+ if (database->getEngineName() != "Lazy")

+ return true;

+

+ static const std::set<std::string> columns_without_table = { "database", "name", "uuid", "metadata_modification_time" };

+ for (const auto & column : header.getColumnsWithTypeAndName())

+ {

+ if (columns_without_table.find(column.name) == columns_without_table.end())

+ return true;

+ }

+ return false;

+}

+

class TablesBlockSource : public ISource

{

@@ -266,6 +282,8 @@ protected:

if (!tables_it || !tables_it->isValid())

tables_it = database->getTablesIterator(context);

+ const bool need_table = needTable(database, getPort().getHeader());

+

for (; rows_count < max_block_size && tables_it->isValid(); tables_it->next())

{

auto table_name = tables_it->name();

@@ -275,23 +293,27 @@ protected:

if (check_access_for_tables && !access->isGranted(AccessType::SHOW_TABLES, database_name, table_name))

continue;

- StoragePtr table = tables_it->table();

- if (!table)

- // Table might have just been removed or detached for Lazy engine (see DatabaseLazy::tryGetTable())

- continue;

-

+ StoragePtr table = nullptr;

TableLockHolder lock;

- /// The only column that requires us to hold a shared lock is data_paths as rename might alter them (on ordinary tables)

- /// and it's not protected internally by other mutexes

- static const size_t DATA_PATHS_INDEX = 5;

- if (columns_mask[DATA_PATHS_INDEX])

+ if (need_table)

{

- lock = table->tryLockForShare(context->getCurrentQueryId(), context->getSettingsRef().lock_acquire_timeout);

- if (!lock)

- // Table was dropped while acquiring the lock, skipping table

+ table = tables_it->table();

+ if (!table)

+ // Table might have just been removed or detached for Lazy engine (see DatabaseLazy::tryGetTable())

continue;

- }

+ /// The only column that requires us to hold a shared lock is data_paths as rename might alter them (on ordinary tables)

+ /// and it's not protected internally by other mutexes

+ static const size_t DATA_PATHS_INDEX = 5;

+ if (columns_mask[DATA_PATHS_INDEX])

+ {

+ lock = table->tryLockForShare(context->getCurrentQueryId(),

+ context->getSettingsRef().lock_acquire_timeout);

+ if (!lock)

+ // Table was dropped while acquiring the lock, skipping table

+ continue;

+ }

+ }

++rows_count;

size_t src_index = 0;

@@ -308,6 +330,7 @@ protected:

if (columns_mask[src_index++])

{

+ chassert(table != nullptr);

res_columns[res_index++]->insert(table->getName());

}

@@ -397,7 +420,9 @@ protected:

else

src_index += 3;

- StorageMetadataPtr metadata_snapshot = table->getInMemoryMetadataPtr();

+ StorageMetadataPtr metadata_snapshot;

+ if (table)

+ metadata_snapshot = table->getInMemoryMetadataPtr();

ASTPtr expression_ptr;

if (columns_mask[src_index++])

@@ -434,7 +459,7 @@ protected:

if (columns_mask[src_index++])

{

- auto policy = table->getStoragePolicy();

+ auto policy = table ? table->getStoragePolicy() : nullptr;

if (policy)

res_columns[res_index++]->insert(policy->getName());

else

@@ -445,7 +470,7 @@ protected:

settings.select_sequential_consistency = 0;

if (columns_mask[src_index++])

{

- auto total_rows = table->totalRows(settings);

+ auto total_rows = table ? table->totalRows(settings) : std::nullopt;

if (total_rows)

res_columns[res_index++]->insert(*total_rows);

else

@@ -490,7 +515,7 @@ protected:

if (columns_mask[src_index++])

{

- auto lifetime_rows = table->lifetimeRows();

+ auto lifetime_rows = table ? table->lifetimeRows() : std::nullopt;

if (lifetime_rows)

res_columns[res_index++]->insert(*lifetime_rows);

else

@@ -499,7 +524,7 @@ protected:

if (columns_mask[src_index++])

{

- auto lifetime_bytes = table->lifetimeBytes();

+ auto lifetime_bytes = table ? table->lifetimeBytes() : std::nullopt;

if (lifetime_bytes)

res_columns[res_index++]->insert(*lifetime_bytes);

else

diff --git a/tests/analyzer_integration_broken_tests.txt b/tests/analyzer_integration_broken_tests.txt

index 68822fbf311..b485f3f60cc 100644

--- a/tests/analyzer_integration_broken_tests.txt

+++ b/tests/analyzer_integration_broken_tests.txt

@@ -96,22 +96,6 @@ test_executable_table_function/test.py::test_executable_function_input_python

test_settings_profile/test.py::test_show_profiles

test_sql_user_defined_functions_on_cluster/test.py::test_sql_user_defined_functions_on_cluster

test_postgresql_protocol/test.py::test_python_client

-test_quota/test.py::test_add_remove_interval

-test_quota/test.py::test_add_remove_quota

-test_quota/test.py::test_consumption_of_show_clusters

-test_quota/test.py::test_consumption_of_show_databases

-test_quota/test.py::test_consumption_of_show_privileges

-test_quota/test.py::test_consumption_of_show_processlist

-test_quota/test.py::test_consumption_of_show_tables

-test_quota/test.py::test_dcl_introspection

-test_quota/test.py::test_dcl_management

-test_quota/test.py::test_exceed_quota

-test_quota/test.py::test_query_inserts

-test_quota/test.py::test_quota_from_users_xml

-test_quota/test.py::test_reload_users_xml_by_timer

-test_quota/test.py::test_simpliest_quota

-test_quota/test.py::test_tracking_quota

-test_quota/test.py::test_users_xml_is_readonly

test_mysql_database_engine/test.py::test_mysql_ddl_for_mysql_database

test_profile_events_s3/test.py::test_profile_events

test_user_defined_object_persistence/test.py::test_persistence

@@ -121,22 +105,6 @@ test_select_access_rights/test_main.py::test_alias_columns

test_select_access_rights/test_main.py::test_select_count

test_select_access_rights/test_main.py::test_select_join

test_postgresql_protocol/test.py::test_python_client

-test_quota/test.py::test_add_remove_interval

-test_quota/test.py::test_add_remove_quota

-test_quota/test.py::test_consumption_of_show_clusters

-test_quota/test.py::test_consumption_of_show_databases

-test_quota/test.py::test_consumption_of_show_privileges

-test_quota/test.py::test_consumption_of_show_processlist

-test_quota/test.py::test_consumption_of_show_tables

-test_quota/test.py::test_dcl_introspection

-test_quota/test.py::test_dcl_management

-test_quota/test.py::test_exceed_quota

-test_quota/test.py::test_query_inserts

-test_quota/test.py::test_quota_from_users_xml

-test_quota/test.py::test_reload_users_xml_by_timer